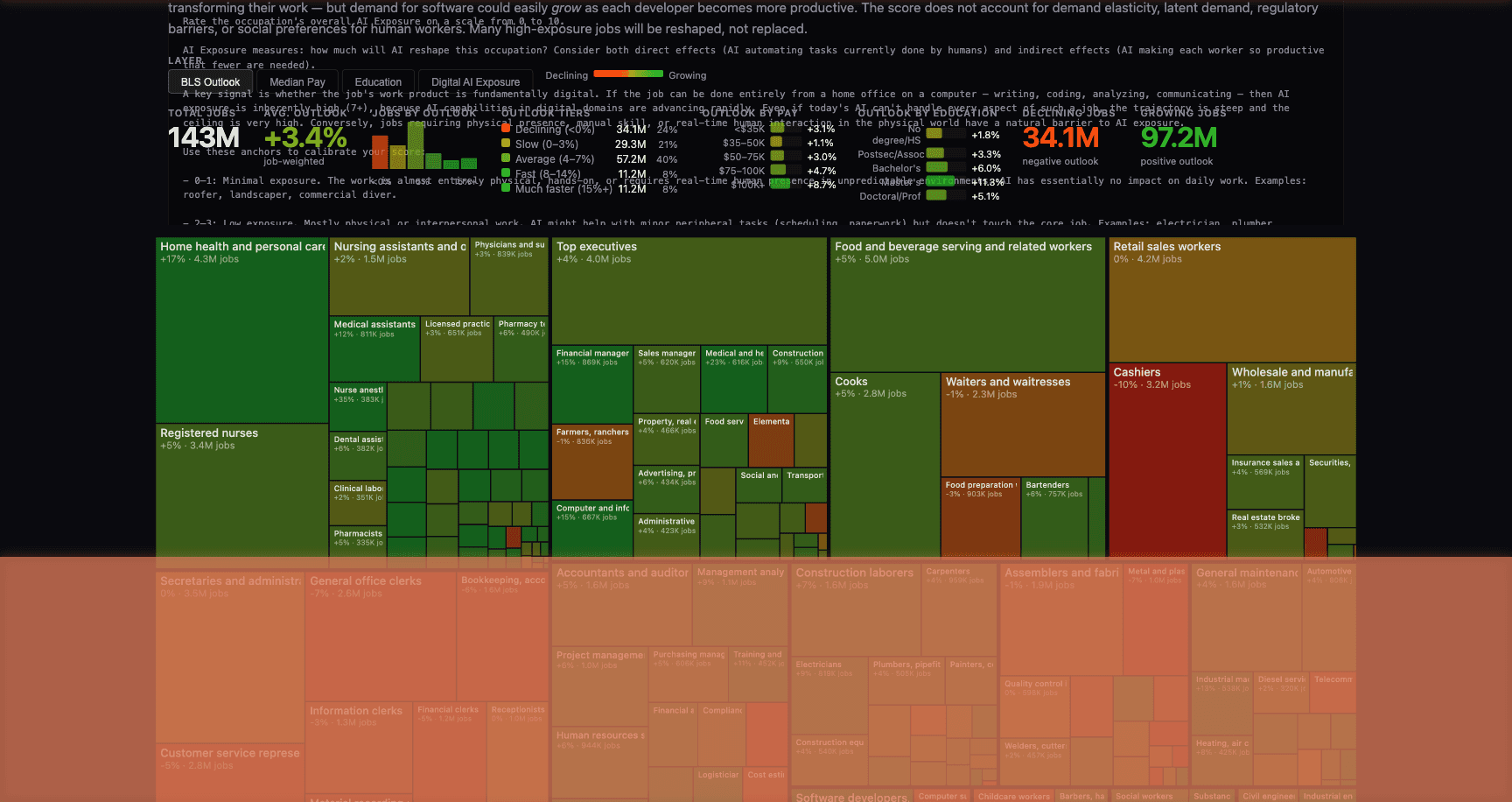

Last week, Andrej Karpathy (OpenAI co-founder, former head of AI at Tesla, and one of the most influential figures in modern artificial intelligence) published an interactive visualization that scores U.S. occupations on AI exposure. Built on LLM-powered analysis of 342 occupations covering 143 million jobs, the tool maps which roles are most likely to be reshaped by AI. The picture is striking: $3.7 trillion in annual wages sits in jobs scoring 7 or higher out of 10 on digital AI exposure. Jobs that run on screens, documents, and information synthesis are squarely in the crosshairs.

Karpathy is no armchair commentator. He led the team that built Tesla’s Autopilot neural networks, and he co-founded the organization that created GPT. When his tool scores an occupation on AI exposure, the framing comes from someone who has personally built the systems doing the disrupting. His analysis reads less like a prediction than an inventory of what’s already underway.

Karpathy’s analysis maps occupations by exposure, but the GovTech sector illustrates something his scores don’t capture directly: in government contracting, AI doesn’t just reshape jobs. It reshapes competitive advantage. The companies that synthesize more context across RFPs, agency strategy documents, budget justifications, and prior solicitations tend to win more contracts. When AI can process thousands of pages of government documents in a single session, the information asymmetry that has always defined this industry shifts fundamentally.

The Information Asymmetry Game

Government contracting runs on documents. RFPs that run 200+ pages. Past performance volumes. Technical proposals. Statements of work (SOWs), contract data requirements lists (CDRLs), and compliance matrices. The companies that win consistently aren’t necessarily the ones with the best technical solution; they’re the ones who understood the requirement best, because they’ve been tracking it since it was a line item in a budget document 18 months before the solicitation dropped.

The incumbent knows the agency’s pain points because they’ve been delivering against the current contract. The company that shaped the requirement during pre-solicitation knows which evaluation criteria carry the most weight. Everyone else is reading the same RFP and trying to reverse-engineer what the agency actually wants from 200 pages of government prose.

The information to close that gap exists — it’s just scattered. Agency strategic plans. Previous solicitations. Congressional testimony. GAO audit reports. Budget justification documents. Industry day presentations. Sources sought notices. Each document contains signal. The challenge has always been synthesizing that signal across dozens of documents into a coherent capture strategy.

What Changes With a 1M-Token Context Window

Anthropic’s 1M-token context window, offered at standard pricing for Claude Opus 4.6 and Sonnet 4.6, turns this synthesis from a “large capture team” advantage into a “better tooling” advantage.
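How many pages actually fit in one window depends heavily on page density. The sketch below is a back-of-the-envelope estimate, assuming roughly 1.3 tokens per English word and page densities you supply; both are rough assumptions, and real counts depend on the model’s tokenizer. `estimate_tokens`, `fits_in_context`, and the sample package are hypothetical.

```python
# Rough token-budget check for a document set against a 1M-token window.
# ASSUMPTIONS: ~1.3 tokens per English word; words-per-page values are guesses.
# Real counts depend on the model's tokenizer, so treat results as estimates.

CONTEXT_WINDOW = 1_000_000  # tokens
TOKENS_PER_WORD = 1.3       # assumed average for English prose


def estimate_tokens(pages: int, words_per_page: int) -> int:
    """Estimate tokens for `pages` pages at `words_per_page` words each."""
    return round(pages * words_per_page * TOKENS_PER_WORD)


def fits_in_context(doc_pages: dict[str, tuple[int, int]],
                    reserve_for_output: int = 20_000) -> tuple[int, bool]:
    """Sum estimated tokens across documents and compare against the window.

    doc_pages maps a document name to (page_count, words_per_page).
    reserve_for_output leaves headroom for the question and the model's answer.
    """
    total = sum(estimate_tokens(p, w) for p, w in doc_pages.values())
    return total, total + reserve_for_output <= CONTEXT_WINDOW


if __name__ == "__main__":
    # Hypothetical capture package; page counts and densities are illustrative.
    package = {
        "strategic_plan": (120, 400),
        "current_sow": (80, 450),
        "previous_rfp": (220, 500),
        "gao_reports": (300, 500),
    }
    total, ok = fits_in_context(package)
    print(f"~{total:,} tokens; fits: {ok}")  # ~447,200 tokens; fits: True
```

Under these assumptions, a 1M-token window holds roughly 1,500 dense pages (500 words each) or about 3,000 lighter pages (250 words each), which is why it is worth estimating before loading.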

Capture intelligence. Load the full text of an agency’s strategic plan, the current contract’s SOW, the previous solicitation, the IT modernization roadmap, relevant GAO findings, and the program’s budget history into one session. Ask: “Where does the agency’s stated strategic direction diverge from the current contract scope?” The model can synthesize across thousands of pages to surface gaps between current performance and future requirements, which is exactly the intelligence that drives effective pre-RFP shaping.
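One way to structure such a session: Anthropic’s long-context prompting tips suggest placing long documents near the top of the prompt, wrapping each in XML-style tags that record its source, and putting the question at the end. The sketch below only builds that prompt string so it runs offline; the `build_capture_prompt` helper and the sample documents are hypothetical, and sending the result (for example, as a user message through the Messages API) is left out.

```python
from xml.sax.saxutils import escape


def build_capture_prompt(documents: dict[str, str], question: str) -> str:
    """Assemble one long-context prompt: all documents first, question last.

    documents maps a source name (e.g. a file name) to its extracted text.
    Text is XML-escaped so a stray '<' or '&' in a source can't break the tags.
    """
    parts = ["<documents>"]
    for index, (source, text) in enumerate(documents.items(), start=1):
        parts.append(
            f'<document index="{index}">\n'
            f"<source>{escape(source)}</source>\n"
            f"<document_content>\n{escape(text)}\n</document_content>\n"
            "</document>"
        )
    parts.append("</documents>")
    # The question goes after every document, following the ordering above.
    parts.append(question)
    return "\n".join(parts)


if __name__ == "__main__":
    # Hypothetical excerpts standing in for full documents.
    docs = {
        "agency_strategic_plan.txt": "Goal 2: migrate legacy case systems to cloud.",
        "current_sow.txt": "The contractor shall maintain the on-premises case system.",
    }
    print(build_capture_prompt(
        docs,
        "Where does the agency's stated strategic direction diverge "
        "from the current contract scope?",
    ))
```

Keeping each source name inside the tags lets you ask the model to cite which document supports each gap it reports.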

Proposal compliance. A 200-page RFP contains requirements scattered across the SOW, evaluation criteria, CDRLs, Section L/M, and compliance matrix. They reference each other. They sometimes contradict each other. Missing a cross-reference is how you get a technically brilliant proposal that scores poorly because it didn’t address an evaluation subfactor buried in Section M that references a requirement in Appendix C.

Load the entire RFP, your compliance matrix, your technical approach, and your past performance volumes into one session. Ask the model to cross-reference every requirement against your response, identify gaps, flag contradictions, and check that every evaluation criterion is explicitly addressed.
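The model handles the judgment calls, but a simple deterministic pre-check can catch mechanical misses first. The sketch below assumes requirements carry section-letter identifiers such as `C.3.1` or `M.2.4` (a hypothetical convention; real RFP numbering varies), collects every identifier the RFP text mentions, and reports any the compliance matrix does not map to a proposal section. `find_unmapped_requirements` and the sample data are hypothetical.

```python
import re

# ASSUMPTION: requirement IDs look like "<section letter>.<number>[.<number>...]",
# e.g. "C.3.1" or "M.2.4". Adjust the pattern to the RFP's actual numbering.
REQ_ID = re.compile(r"\b[A-M]\.\d+(?:\.\d+)*\b")


def _natural_key(req_id: str) -> tuple[str, list[int]]:
    """Sort "C.3.2" before "C.10" by comparing the numeric parts as integers."""
    return req_id[0], [int(part) for part in req_id[2:].split(".")]


def find_unmapped_requirements(rfp_text: str,
                               compliance_matrix: dict[str, str]) -> list[str]:
    """Return requirement IDs mentioned in the RFP but not mapped in the matrix.

    compliance_matrix maps a requirement ID to the proposal section answering it;
    a blank section counts as unmapped.
    """
    mentioned = set(REQ_ID.findall(rfp_text))
    mapped = {req for req, section in compliance_matrix.items() if section.strip()}
    return sorted(mentioned - mapped, key=_natural_key)


if __name__ == "__main__":
    rfp = ("M.2.4 Technical approach will be evaluated against C.3.1 and C.3.2. "
           "Offerors shall describe transition per L.5.1, which also references C.10.")
    matrix = {"C.3.1": "Vol I, 2.1", "C.3.2": "",
              "L.5.1": "Vol I, 4.0", "M.2.4": "Vol I, 2.0"}
    print(find_unmapped_requirements(rfp, matrix))  # ['C.3.2', 'C.10']
```

A check like this only confirms that each referenced requirement points somewhere; whether the mapped section actually answers it is still the reviewer’s (or the model’s) call.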

Competitive landscape. Load publicly available contract data — FPDS records, USASpending data, award announcements, protest decisions, and incumbents’ public past performance — into one session. Build a competitive analysis that identifies strengths, likely weaknesses, and the evaluation criteria where your team has the strongest differentiators.
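Summarizing award records deterministically gives the analysis accurate totals to start from. The sketch below assumes records have already been exported from FPDS or USASpending into simple dictionaries; the field names `vendor`, `agency`, and `obligated` are hypothetical stand-ins, not those systems’ actual schemas, and the vendors are placeholders.

```python
from collections import defaultdict


def vendor_shares(awards: list[dict], agency: str) -> list[tuple[str, float, float]]:
    """Total obligations per vendor at one agency, with each vendor's share.

    Returns (vendor, total_obligated, share_of_agency_total), largest first.
    Field names are hypothetical; map your export's columns onto them first.
    """
    totals: dict[str, float] = defaultdict(float)
    for award in awards:
        if award["agency"] == agency:
            totals[award["vendor"]] += award["obligated"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    return [(vendor, amount, amount / grand_total) for vendor, amount in ranked]


if __name__ == "__main__":
    awards = [  # illustrative records, not real award data
        {"vendor": "Vendor A", "agency": "DHS", "obligated": 4_000_000.0},
        {"vendor": "Vendor B", "agency": "DHS", "obligated": 3_000_000.0},
        {"vendor": "Vendor A", "agency": "DHS", "obligated": 2_000_000.0},
        {"vendor": "Vendor C", "agency": "DHS", "obligated": 1_000_000.0},
        {"vendor": "Vendor B", "agency": "DOE", "obligated": 9_000_000.0},
    ]
    for vendor, amount, share in vendor_shares(awards, "DHS"):
        print(f"{vendor}: ${amount:,.0f} ({share:.0%})")
```

The ranked totals give the model a factual baseline, so its narrative about strengths and weaknesses can focus on the qualitative sources.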

Compliance framework mapping. Government contracts layer compliance requirements — FedRAMP, FISMA, Section 508, CMMC, supply chain risk management. Each is a lengthy document. Load every applicable framework alongside your technical approach and identify the compliance gaps and framework conflicts in one pass.
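The “gaps” half of that pass can be sketched as a set comparison: list the controls each framework requires, list the controls your technical approach claims to address, and report the difference per framework, plus any controls that several frameworks share. The framework contents below are small, hypothetical subsets: FedRAMP and FISMA entries use NIST SP 800-53 control names (FedRAMP baselines are built on SP 800-53), and the Section 508 entries use WCAG 2.0 success-criterion numbers with an invented `WCAG-` prefix. None is a real baseline, and detecting conflicts between frameworks is left to the model.

```python
from collections import Counter

# ILLUSTRATIVE ONLY: hypothetical subsets, not complete or official baselines.
REQUIRED = {
    "FedRAMP": {"AC-2", "AU-6", "IA-2", "SC-13"},
    "FISMA": {"AC-2", "AU-6", "CA-7"},
    "Section 508": {"WCAG-1.1.1", "WCAG-1.4.3"},
}


def framework_gaps(required: dict[str, set[str]],
                   addressed: set[str]) -> dict[str, list[str]]:
    """Per framework, the required IDs the approach does not address (omits frameworks with no gaps)."""
    return {
        framework: sorted(controls - addressed)
        for framework, controls in required.items()
        if controls - addressed
    }


def shared_controls(required: dict[str, set[str]]) -> list[str]:
    """IDs required by more than one framework, where one fix may close several gaps."""
    counts = Counter(c for controls in required.values() for c in controls)
    return sorted(c for c, n in counts.items() if n > 1)


if __name__ == "__main__":
    addressed = {"AC-2", "IA-2", "WCAG-1.1.1"}  # hypothetical approach coverage
    print(framework_gaps(REQUIRED, addressed))
    # {'FedRAMP': ['AU-6', 'SC-13'], 'FISMA': ['AU-6', 'CA-7'], 'Section 508': ['WCAG-1.4.3']}
    print(shared_controls(REQUIRED))  # ['AC-2', 'AU-6']
```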

Post-award management. Load the full contract — base period SOW, all option year modifications, QASP, CLINs, CDRLs, and COR correspondence — into one session. Identify deliverables coming due, flag where modifications have created scope ambiguity, and trace any requirement back through its modification history.
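The bookkeeping side of post-award work can be sketched with plain data structures: deliverables with due dates, and modifications replayed in effective-date order so any requirement’s history can be traced. The `Deliverable` and `Modification` classes, their fields, and the sample CDRL and modification numbers are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Deliverable:
    cdrl: str  # CDRL sequence number, e.g. "A001" (hypothetical)
    title: str
    due: date


@dataclass
class Modification:
    number: str              # modification number, e.g. "P00001" (hypothetical)
    effective: date
    changes: dict[str, str]  # requirement ID -> requirement text after this mod


def due_within(deliverables: list[Deliverable], today: date, days: int) -> list[Deliverable]:
    """Deliverables due from today through today + days (inclusive), soonest first."""
    horizon = today + timedelta(days=days)
    upcoming = [d for d in deliverables if today <= d.due <= horizon]
    return sorted(upcoming, key=lambda d: d.due)


def trace_requirement(req_id: str, base_text: str,
                      mods: list[Modification]) -> list[tuple[str, str]]:
    """Replay modifications in effective-date order, recording each change to req_id.

    Returns (source, text) pairs, starting with the base-period text.
    """
    history = [("base", base_text)]
    for mod in sorted(mods, key=lambda m: m.effective):
        if req_id in mod.changes:
            history.append((mod.number, mod.changes[req_id]))
    return history


if __name__ == "__main__":
    deliverables = [
        Deliverable("A001", "Transition Plan", date(2025, 1, 15)),
        Deliverable("A002", "Monthly Status Report", date(2025, 1, 31)),
        Deliverable("A003", "Annual Security Review", date(2025, 6, 1)),
    ]
    for d in due_within(deliverables, today=date(2025, 1, 10), days=30):
        print(d.cdrl, d.title, d.due)

    mods = [
        Modification("P00002", date(2024, 9, 1), {"C.4.2": "Report due by 10th business day."}),
        Modification("P00001", date(2024, 3, 1), {"C.4.2": "Report due by 5th business day."}),
    ]
    for source, text in trace_requirement("C.4.2", "Report due by the 15th.", mods):
        print(source, "->", text)
```

Sorting by effective date rather than list order matters when modifications are entered out of sequence, which is exactly where scope ambiguity tends to hide.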

Why This Levels the Playing Field

Karpathy’s data shows that knowledge work built on document synthesis scores highest on AI exposure. Government contracting is, at its core, document synthesis, from capture through performance.

The structural advantage has always belonged to large primes with deep capture teams that could dedicate analysts to tracking opportunities for months before the solicitation. Smaller competitors with better technical approaches often lost because they couldn’t match that intelligence-gathering infrastructure.

At standard pricing, loading a program’s publicly available documentation into a single session costs a few dollars. The capture intelligence that comes back would take an analyst team days to produce. SAM.gov buried your best opportunity on page 12. The budget justification that explains the re-compete was published nine months ago. The information was always available. The question was always whether you could synthesize it fast enough.
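The “few dollars” figure is easy to sanity-check. Per-token prices differ by model and change over time, so the rates below are placeholder assumptions (dollars per million input and output tokens), not quoted Anthropic pricing; substitute current published rates. `session_cost` is a hypothetical helper.

```python
def session_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one request, given prices per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


if __name__ == "__main__":
    # ASSUMED rates for illustration only: $3 per 1M input, $15 per 1M output.
    cost = session_cost(input_tokens=800_000, output_tokens=4_000,
                        input_price_per_m=3.0, output_price_per_m=15.0)
    print(f"${cost:.2f}")  # $2.46
```

Input dominates the bill here, and each follow-up question in the same conversation sends the documents again as input, so the per-question cost repeats.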

That question just got a very different answer.

We build AI platforms for GovTech operations — capture intelligence, proposal management, compliance mapping — for organizations that compete on information advantage. If you’re evaluating what this means for your government contracting pipeline, start a conversation.